Latest Update: Improved Code, Collisions and Hose

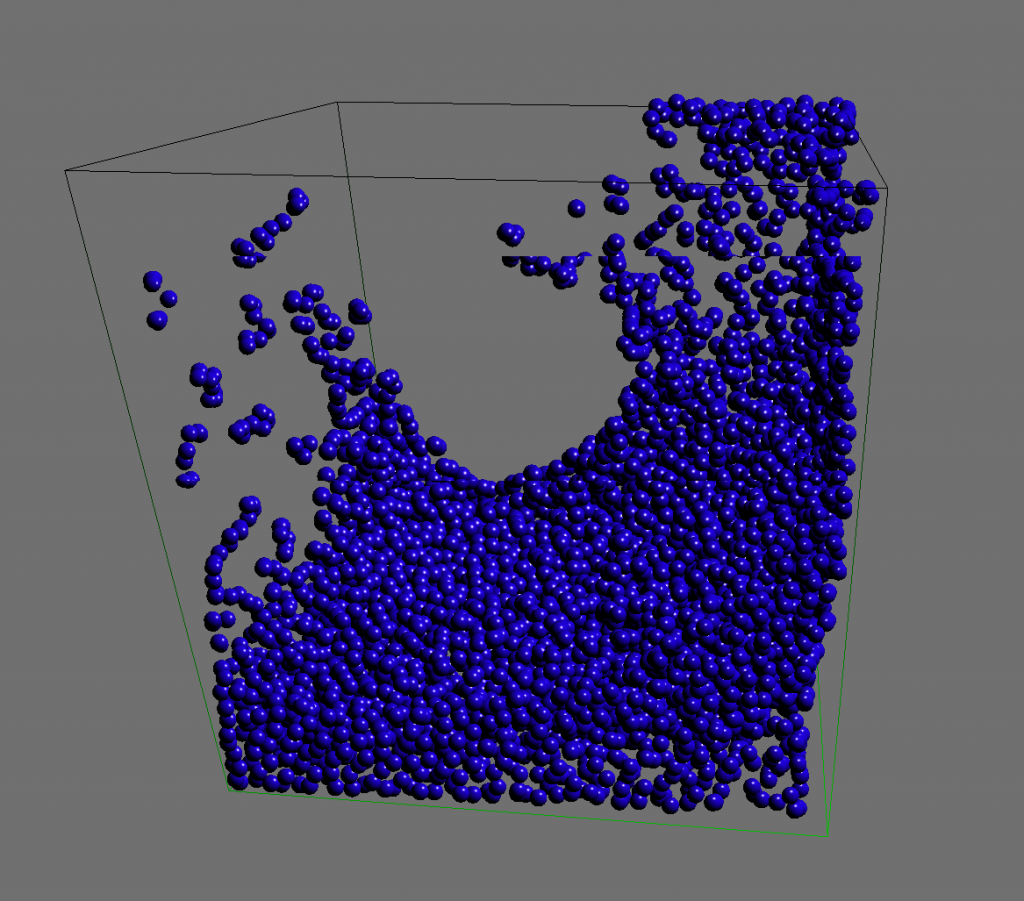

Gentlemen, behold! (Ladies too.) At last I have something to show! My advisor and I have finally gotten our Real Time Particle System implementation to the point where it can see the light of day. We have been working on an OpenCL implementation of the SPH (smoothed particle hydrodynamics) method for simulating fluids in real time. At this point it looks pretty good, which is the scientific way of saying there is still a lot of work to do. Take a look at the video to see it in action inside the Blender Game Engine!

Particles in BGE: Real-Time Fluids with OpenCL from Ian Johnson on Vimeo.

The rest of this post is a fairly technical discussion of what’s been done and what still needs to be done. If you just came for the demo and pretty pictures, reading any further puts you at risk of entering an extreme state of geekiness, which can be quite disorienting and uncomfortable for those unaccustomed to the high.

The Project

This project is currently split into two parts: the RTPS library and my modifications to the Blender Game Engine. The RTPS (Real Time Particle System) library has a standalone viewer for development and testing, which also serves as a limited example of the API. My Blender modifications are my hackish attempts to link against the library and provide an interface. One nice thing is that the library is compiled as a shared library, meaning we can make modifications to it without recompiling Blender. I say hackish because I’m using a mix of a custom modifier and game properties to pass the necessary information to the RTPS instance. Really I should have my own Particle System object, a separate Domain object and proper Python hooks so that properties of the system could be changed dynamically by scripts and actuators. Luckily Blender has a great community, and I’d like to give a shout-out to Moguri for his help so far!

How Fast is Real Time?

Most gamers know that FPS (frames per second) is important to their experience, and the higher the number the better. A reasonable target for an interactive 3D game is 60fps, though 30fps can be deemed acceptable, and movies play back at 24fps. In the computational sciences we measure things in milliseconds: at 60 frames per second you have about 17ms to make one frame, and at 30fps about 33ms. So we don’t have a lot of time to work with when building frames. Not only do we need to compute the new position of every fluid particle in that time, we also need to draw them and handle everything else going on in the game engine!
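The frame budgets quoted above follow directly from dividing one second by the frame rate. Here is a minimal sketch of that arithmetic; the function name `frame_budget_ms` is just an illustrative choice, not something from the RTPS library.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps


if __name__ == "__main__":
    # The three frame rates mentioned in the text.
    for fps in (60, 30, 24):
        print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

Whatever the simulation update costs has to fit inside this budget together with rendering and the rest of the game logic.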

So how fast are we going now? First it’s important to talk about what machine we are running on. Currently I have timings for a ’09 MacBook Pro which has an NVIDIA GeForce 9400M, a Dell T7500 with an ATI FirePro V7800 and a Dell T7500 with an NVIDIA GTX480.

The MacBook Pro is using Apple’s OpenCL drivers. Since it has the least powerful GPU, we expect it to be the slowest. The two Dells are running Ubuntu 10.04: the one with the ATI card is running the ATI Stream SDK 2.2 and the one with the NVIDIA card is running the 260.24 driver. We only recently obtained the ATI card and haven’t had enough time to test things thoroughly.

The following timings are for the update loop in the RTPS library (that is, all of the calls to OpenCL that update the positions of the particles based on the SPH method). They do not include rendering.

| Card | 4096 Particles | 8192 Particles | 16384 Particles |
| --- | --- | --- | --- |
| 9400M | 25ms | 42ms | 78ms |
| ATI FirePro V7800 | 7.5ms | 8ms | 8ms |
| NVIDIA GTX480 | 2ms | 2.5ms | 4.8ms |

Right off the bat we see that the MacBook Pro is already too slow for desirable frame rates. The two powerful cards perform quite well, however, and you may notice something strange about the ATI card: the timings stay almost the same as the particle count grows!
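One way to see how differently the cards scale is to compare the time at 16384 particles with the time at 4096 particles. The sketch below only re-uses the numbers from the table; the names `timings_ms` and `growth_factor` are made up for illustration.

```python
# Update-loop timings in milliseconds, copied from the timing table.
timings_ms = {
    "9400M": {4096: 25.0, 8192: 42.0, 16384: 78.0},
    "ATI FirePro V7800": {4096: 7.5, 8192: 8.0, 16384: 8.0},
    "NVIDIA GTX480": {4096: 2.0, 8192: 2.5, 16384: 4.8},
}


def growth_factor(card: str) -> float:
    """How many times longer the update takes with 4x the particles."""
    t = timings_ms[card]
    return t[16384] / t[4096]


if __name__ == "__main__":
    for card in timings_ms:
        print(f"{card}: {growth_factor(card):.2f}x the time for 4x the particles")
```

The factors come out to roughly 3.1 for the 9400M, 1.1 for the ATI card and 2.4 for the GTX480, which is the flat ATI behaviour noted above.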

One explanation that fits these timings well (besides the timings being completely wrong ;) is that the expensive part of this algorithm is the neighborhood calculation: updating each particle’s force by doing calculations on all the particles around it. This requires lots of memory lookups, which are expensive on the GPU. The 9400M doesn’t actually have onboard RAM, so it has to go all the way to the CPU’s main memory for those accesses. At the other end of the spectrum, the GTX480 has advanced caching mechanisms which handle our non-optimal implementation quite nicely. More testing is necessary on the ATI, but my theory is that memory latency is completely hiding the computational cost of the neighbor routines.
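To make the cost of the neighborhood calculation concrete, here is a plain Python sketch of the simplest possible version: a brute-force density sum where every particle looks at every other particle. This is not the RTPS OpenCL code. The poly6 smoothing kernel, the function names and the parameters `mass` and `h` (smoothing radius) are assumptions chosen for illustration, since the post does not say which kernel the library uses.

```python
import math


def poly6(r2: float, h: float) -> float:
    """Poly6 smoothing kernel, evaluated from the squared distance r2.

    Assumed kernel for illustration; zero outside the smoothing radius h.
    """
    if r2 > h * h:
        return 0.0
    return 315.0 / (64.0 * math.pi * h ** 9) * (h * h - r2) ** 3


def densities(positions, mass: float, h: float):
    """Brute-force SPH density: each particle reads every particle's position."""
    result = []
    for xi, yi, zi in positions:
        rho = 0.0
        for xj, yj, zj in positions:  # N lookups per particle, N*N in total
            r2 = (xi - xj) ** 2 + (yi - yj) ** 2 + (zi - zj) ** 2
            rho += mass * poly6(r2, h)
        result.append(rho)
    return result


if __name__ == "__main__":
    pts = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (5.0, 0.0, 0.0)]
    print(densities(pts, mass=1.0, h=1.0))
```

The inner loop is almost nothing but position reads, which is why memory behaviour can dominate the runtime more than the arithmetic does.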

We plan to implement more efficient data structures and algorithms to gain what we hope will be large speedups for older GPUs and perhaps the ATI as well.
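The post does not say which data structures are planned. Purely as an illustration of the general idea, one common option in particle codes is a uniform grid with cell size equal to the smoothing radius, so each particle only inspects its own cell and the 26 cells around it. The sketch below is an assumption in every detail (the names `build_grid` and `neighbors`, the cell size, the Python dictionary standing in for GPU buffers) and is not the RTPS design.

```python
import math
from collections import defaultdict


def cell_of(point, h: float):
    """Integer grid cell containing a 3D point, for cell size h."""
    x, y, z = point
    return (math.floor(x / h), math.floor(y / h), math.floor(z / h))


def build_grid(positions, h: float):
    """Map each occupied cell to the indices of the particles inside it."""
    grid = defaultdict(list)
    for idx, point in enumerate(positions):
        grid[cell_of(point, h)].append(idx)
    return grid


def neighbors(i: int, positions, grid, h: float):
    """Indices of particles within distance h of particle i (excluding i)."""
    x, y, z = positions[i]
    cx, cy, cz = cell_of(positions[i], h)
    found = []
    for dx in (-1, 0, 1):
        for dy in (-1, 0, 1):
            for dz in (-1, 0, 1):
                # Only the 27 surrounding cells are inspected.
                for j in grid.get((cx + dx, cy + dy, cz + dz), ()):
                    px, py, pz = positions[j]
                    r2 = (x - px) ** 2 + (y - py) ** 2 + (z - pz) ** 2
                    if j != i and r2 <= h * h:
                        found.append(j)
    return found


if __name__ == "__main__":
    pts = [(0.1, 0.1, 0.1), (0.9, 0.1, 0.1), (1.05, 0.1, 0.1), (3.0, 3.0, 3.0)]
    grid = build_grid(pts, h=1.0)
    print(sorted(neighbors(0, pts, grid, h=1.0)))
```

With a structure like this the number of position reads per particle depends on local density rather than on the total particle count, which is the kind of saving that would matter most on cards with slow memory access.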

As a word of warning, with our current setup I’ve been getting some nasty crashes with the ATI card, but we suspect it has something to do with all the duct-tape.

What’s Next?

My first priority is improving the UI for the RTPS library in Blender. This includes integrating it more properly and making more parameters accessible both during setup and at runtime. I want to provide Python access so that particles can be emitted dynamically in many more ways. Collision detection should be coming back soon too! My advisor is interested in increasing the efficiency and accuracy of the SPH implementation. One of my fellow students, Andrew Young, is working on a way to extract the surface so we can do some pretty rendering, and he is also interested in a multi-GPU implementation.

Thanks

I’d like to thank:

- my advisor Dr. Gordon Erlebacher for always pushing me to go bigger and better (also for writing a bunch of the SPH code!)

- my groupmates Evan Bollig, Myrna Merced and Andrew Young

- the Department of Scientific Computing at Florida State University

- the FSU Visualization Lab

- the Blender community with special shoutouts to Moguri, dfelinto, Roman and mindrones

- Krog for his CUDA SPH implementation

- my partner in mathematical crime Nathan Crock

Excellent post Ian. I just looked at the video. May I suggest a setting where the hose emits continuously, perhaps with random emission? Imagine imitating a real hose. I like it! Keep it up. Gordon.

Sooo you use Blender for visualization and input control? I’ve never really used Blender. Is it much work to integrate something like this simulation into Blender?

Pretty cool stuff, I wish I got to play with sweet graphics.

yep, when you work in Blender a lot, you need lots of Starbucks :D

Nice Ian! At least 3 “Grandes” worth and several long nights until 4:00am. ;-)

Did you base this on the CUDA samples?

That surface guy should get a move on, I want to see some slick surface renderings in real time! ^_^

!!!! Thanks for sharing the impressive developments. I hope this makes it into trunk someday soon, can’t wait to test it out.

OpenCL SPH in Blender? Really great work, dude! Hope this makes it into trunk…

Congrats on the accomplishment. It’s exciting news to hear this in the new year.

Is this possible outside of the BGE? Say… Particles System with OpenCL.

Are you using Bullet MiniCL?

Just a quick question:

Is this only for BGE, or will it also be available for inside blender?

@Whimsy it will eventually be possible outside the BGE, and I hope to converge with the existing Particle System as much as possible. The focus of my master’s is to make this a tool for educational games, so it won’t be my priority. I am constantly learning and working towards tighter integration with Blender, so I hope it will get there :)

I am not currently using Bullet MiniCL; I wrote my own interface so I could learn the fundamentals. There is a definite need for a cross-platform OpenCL interface in consumer-grade open source software, since there are too many variables and options for one person to try and handle. I will take a look at integrating with Bullet better when my code is cleaner and I understand its APIs better!

Very nice!

What brand monitor do you use?

Nice work!

Any chance we can get hold of the branch and/or patches?

Particles.

Would you be able to look into Grass / Hair particles?

The GE really lacks Grass, ask anyone!

Hair, not so much, but I presume it would work in a relatively similar way.

Because most of the properties in the GE are defined in the Logic or the Physics tab, I think it should be added as an actuator!

That way, you can enable/disable it in the GE via Python.

From there, make the settings on the actuator accessible via Python, e.g.:

    import bge

    controller = bge.logic.getCurrentController()
    # "particle" is the proposed actuator; length and emit are suggested settings
    particle = controller.actuators["particle"]
    particle.length = 3.0
    particle.emit = 50

etc….

Wow, that’s some impressive work. What’s the possibility of you later attempting to integrate your library with a preexisting game engine, such as Cube 2? Dynamic fluid effects would be an amazing addition to modern games. I understand that it probably has its own interfaces and dependencies, and would be a difficult project, but you have already created quite an effect.

This is soo awesome! Could we get an update on how it’s going and maybe an approximate time frame of when we could see this? That would be great. :)

How did you do this part: press one button, and then particles come out? Thank you for your patience.